Note: What I present below is scarcely believable to me. I have looked for an error in my analysis, but cannot find one. Nevertheless, extraordinary claims require extraordinary evidence, so let the following be an introduction to a potential issue with current carbon cycle models that might well be easily resolved by others with more experience and insight than I possess.

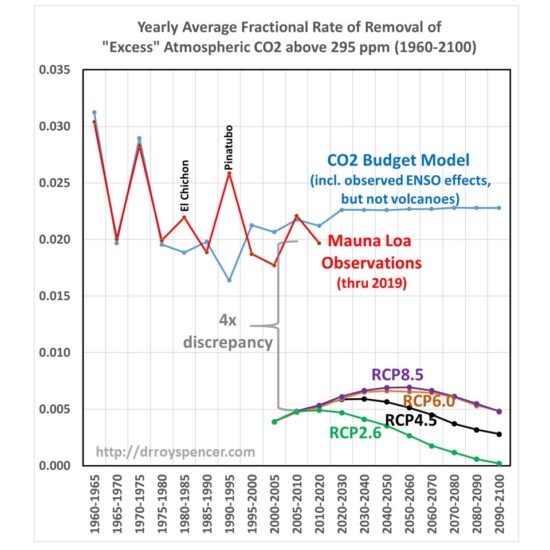

UPDATE (2/6/2020): It turns out I made an error (as I feared) in my calculations that went into Fig. 1, below. You might want to instead read my corrected results here and what they suggest. The bottom line is that the IPCC carbon cycle models by 2100 reduce the fractional rate of removal of extra atmospheric CO2 by a factor of 3-4 versus what has actually been happening over the last 60 years of Mauna Loa CO2 data. That (as Fig. 2 in my previous post suggested) will have an effect on future CO2 projections and, in turn, global warming forecasts. But my previous claim that the discrepancy exists during the Mauna Loa record was incorrect.

Summary

Sixty years of Mauna Loa CO2 data compared to yearly estimates of anthropogenic CO2 emissions show that Mother Nature has been removing 2.3%/year of the “anthropogenic excess” of atmospheric CO2 above a baseline of 295 ppm. When similar calculations are done for the RCP (Representative Concentration Pathway) projections of anthropogenic emissions and CO2 concentrations, it is found that the carbon cycle models those projections are based upon remove excess CO2 at only one-fourth the observed rate. If these results are anywhere near accurate, the future RCP projections of CO2, as well as the climate model projections of the resulting warming, are probably biased high.

Introduction

My previous post from a few days ago showed the performance of a simple CO2 budget model that, when forced with estimates of yearly anthropogenic emissions, very closely matches the yearly average Mauna Loa CO2 observations during 1959-2019. I assume that a comparable level of agreement is a necessary condition of any model that is relied upon to predict future levels of atmospheric CO2 if it is to have any hope of making useful predictions of climate change.

In that post I forced the model with EIA projections of future emissions (0.6%/yr growth until 2050) and compared it to the RCP (Representative Concentration Pathway) scenarios used for forcing the IPCC climate models. I concluded that we might never reach a doubling of atmospheric CO2 (2XCO2).

But what I did not address was the relative influence on those results of (1) assumed future anthropogenic CO2 emissions versus (2) how fast nature removes excess CO2 from the atmosphere. Most critiques of the RCP scenarios address the former, but not the latter. Both are needed to produce an RCP scenario.

I implied that the RCP scenarios from models did not remove CO2 fast enough, but I did not actually demonstrate it. That is the subject of this short article.

What Should the Atmospheric CO2 Removal Rate be Compared To?

The Earth’s surface naturally absorbs from, and emits into, the huge atmospheric reservoir of CO2 through a variety of biological and geochemical processes.

We can make the simple analogy to a giant vat of water (the atmospheric reservoir of CO2), with a faucet pouring water into the vat and a drain letting water out of the vat. Let’s assume those rates of water gain and loss are nearly equal, in which case the level of water in the vat (the CO2 content of the atmosphere) never changes very much. This was supposedly the natural state of CO2 flows in and out of the atmosphere before the Industrial Revolution, and is an assumption I will make for the purposes of this analysis.

Now let’s add another faucet that drips water into the vat very slowly, over many years, analogous to human emissions of CO2. I think you can see that there must be some change in the removal rate from the drain to offset the extra gain of water, otherwise the water level will rise at the same rate that the additional water is dripping into the vat. It is well known that atmospheric CO2 is rising at only about 50% of the rate at which we produce CO2, indicating the “drain” is indeed flowing more strongly.

Note that I don’t really care if 5% or 50% of the water in the vat is exchanged every year through the actions of the main faucet and the drain; I want to know how much faster the drain will accommodate the extra water being put into the vat, limiting the rise of water in the vat. This is also why any arguments [and models] based upon atomic bomb C-14 removal rates are, in my opinion, not very relevant. Those are useful for determining the average rate at which carbon cycles through the atmospheric reservoir, but not for determining how fast the extra ‘overburden’ of CO2 will be removed. For that, we need to know how the biological and geochemical processes change in response to more atmospheric CO2 than they have been used to in centuries past.

The CO2 Removal Fraction vs. Emissions Is Not a Useful Metric

For many years I have seen reference to the average equivalent fraction of excess CO2 that is removed by nature, and I have often (incorrectly) said something similar to this: “about 50% of yearly anthropogenic CO2 emissions do not show up in the atmosphere, because they are absorbed.” I believe this was discussed in the very first IPCC report, FAR. I’ve used that 50% removal fraction myself, many times, to describe how nature removes excess CO2 from the atmosphere.

Recently I realized this is not a very useful metric, and as phrased above is factually incorrect and misleading. In fact, it’s not 50% of the yearly anthropogenic emissions that is absorbed; it’s an amount that is equivalent to 50% of emissions. You see, Mother Nature does not know how much CO2 humanity produces every year; all she knows is the total amount in the atmosphere, and that’s what the biosphere and various geochemical processes respond to.

It’s easy to demonstrate that the removal fraction, as is usually stated, is not very useful. Let’s say humanity cut its CO2 emissions by 50% in a single year, from 100 units to 50 units. If nature had previously been removing about 50 units per year (50 removed versus 100 produced is a 50% removal rate), it would continue to remove very close to 50 units because the atmospheric concentration hasn’t really changed in only one year. The result would be that the new removal fraction would shoot up from 50% to 100%.

Clearly, that change to a 100% removal fraction had nothing to do with an enhanced rate of removal of CO2; it’s entirely because we made the removal rate relative to the wrong variable: yearly anthropogenic emissions. It should be referenced instead to how much “extra” CO2 resides in the atmosphere.
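The arithmetic in that thought experiment can be written out in a few lines of Python. This is only an illustration using the 100-unit and 50-unit numbers from the text; the size of the atmospheric excess (2000 units) is a made-up value, chosen only to show that a removal rate referenced to the excess does not jump when emissions are cut.

```python
def removal_fraction_of_emissions(removed, emitted):
    """Removal expressed as a fraction of the same year's emissions."""
    return removed / emitted


def removal_rate_of_excess(removed, excess):
    """Removal expressed as a fraction of the extra CO2 in the atmosphere."""
    return removed / excess


# Numbers from the thought experiment above (arbitrary "units" per year).
removed = 50.0            # nature keeps removing about the same amount
emitted_before = 100.0
emitted_after = 50.0      # emissions cut in half in a single year

# Hypothetical atmospheric excess, assumed unchanged over one year.
excess = 2000.0

frac_before = removal_fraction_of_emissions(removed, emitted_before)
frac_after = removal_fraction_of_emissions(removed, emitted_after)
rate_before = removal_rate_of_excess(removed, excess)
rate_after = removal_rate_of_excess(removed, excess)

print(frac_before, frac_after)   # 0.5 1.0
print(rate_before, rate_after)   # 0.025 0.025
```

The emissions-based fraction doubles even though nothing about the removal process changed, while the excess-based rate stays put.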

The “Atmospheric Excess” CO2 Removal Rate

The CO2 budget model I described here and here removes atmospheric CO2 at a rate proportional to how high the CO2 concentration is above a background level nature is trying to “relax” to, a reasonable physical expectation that is supported by observational data.

Based upon my analysis of the Mauna Loa CO2 data versus the Boden et al. (2017) estimates of global CO2 emissions, that removal rate is 2.3%/yr of the atmospheric excess above 295 ppm. That simple relationship provides an exceedingly close match to the long-term changes in Mauna Loa yearly CO2 observations, 1959-2019 (I also include the average effects of El Nino and La Nina in the CO2 budget model).
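For readers who want to see the idea in code, here is a minimal Python sketch of a one-box budget of this kind. It is not my actual model: it leaves out the El Nino and La Nina term, the function name `simulate_co2` is made up for illustration, and the emissions values in the usage example are hypothetical numbers already expressed in ppm per year.

```python
def simulate_co2(initial_ppm, emissions_ppm_per_year,
                 removal_rate=0.023, baseline_ppm=295.0):
    """Step a one-box CO2 budget forward one year at a time.

    Each year: new = old + emissions - removal_rate * (old - baseline).
    All quantities are in ppm (emissions in ppm per year).
    """
    conc = initial_ppm
    history = [conc]
    for emitted in emissions_ppm_per_year:
        # Removal is proportional to the excess above the baseline.
        removed = removal_rate * (conc - baseline_ppm)
        conc = conc + emitted - removed
        history.append(conc)
    return history


# Usage with hypothetical inputs: start at 400 ppm, emit 4 ppm/yr for 5 years.
path = simulate_co2(400.0, [4.0] * 5)
for year, ppm in enumerate(path):
    print(year, round(ppm, 2))
```

The only tunable numbers are the removal rate (2.3%/yr) and the baseline (295 ppm), both taken from the text above.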

So, the question arises, how does this CO2 removal rate compare to the RCP scenarios used as input to the IPCC climate models? The answer is shown in Fig. 1, where I have computed the yearly average CO2 removal rate from the Mauna Loa data and from the simple CO2 budget model in the same way as I did for the RCP scenarios. Since the RCP data I obtained from the source has emissions and CO2 concentrations every 5 (or 10) years from 2000 onward, I computed the yearly average removal rates using those bounding years from both observations and from models.

The four RCP scenarios do indeed have an increasing rate of removal as atmospheric CO2 concentrations rise during the century, but their average rates of removal are much too low. Amazingly, there appears to be about a factor of four discrepancy between the CO2 removal rate deduced from the Mauna Loa data (combined with estimates of historical CO2 emissions) versus the removal rate in the carbon cycle models used for the RCP scenarios during their overlap period, 2000-2019.

Such a large discrepancy seems scarcely believable, but I have checked and re-checked my calculations, which are rather simple: they depend only upon the atmospheric CO2 concentrations, and yearly CO2 emissions, in two bounding years. Since I am not well read in this field, if I have overlooked some basic issue or ignored some previous work on this specific subject, I apologize.
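To make the kind of calculation concrete, here is one way a two-bounding-year removal rate could be computed in Python. Treat it as a sketch under stated assumptions, not as the exact calculation behind Fig. 1 (which, per the update at the top, contained an error). It assumes emissions are given in GtC per year, uses the commonly quoted approximate conversion of 2.13 GtC per ppm, and averages the two bounding years; the function name and the sample numbers are hypothetical.

```python
GTC_PER_PPM = 2.13  # approximate conversion factor, assumed for this sketch


def excess_removal_rate(conc_start_ppm, conc_end_ppm,
                        emis_start_gtc, emis_end_gtc,
                        years, baseline_ppm=295.0):
    """Average fractional removal rate of excess CO2 between two years.

    removal = (mean emissions) - (observed growth), both in ppm per year,
    divided by the mean excess above the baseline.
    """
    mean_emis_ppm = 0.5 * (emis_start_gtc + emis_end_gtc) / GTC_PER_PPM
    growth_ppm = (conc_end_ppm - conc_start_ppm) / years
    removed_ppm = mean_emis_ppm - growth_ppm
    mean_excess_ppm = 0.5 * (conc_start_ppm + conc_end_ppm) - baseline_ppm
    return removed_ppm / mean_excess_ppm


# Hypothetical inputs: 390 -> 400 ppm over 5 years, 9.159 GtC/yr emitted.
rate = excess_removal_rate(390.0, 400.0, 9.159, 9.159, years=5)
print(round(rate, 4))  # 0.023
```

With these made-up inputs, emissions are 4.3 ppm/yr, the atmosphere grows by 2 ppm/yr, so 2.3 ppm/yr is removed from a mean excess of 100 ppm.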

Recomputing the RCP Scenarios with the 2.3%/yr CO2 Removal Rate

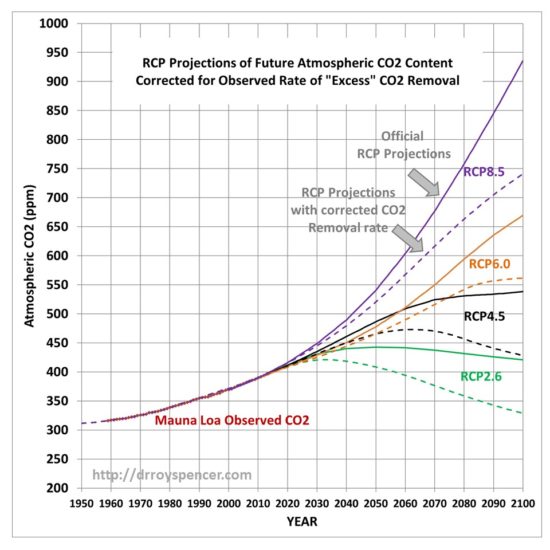

This raises the question of what the RCP scenarios of future atmospheric CO2 content would look like if their assumed emissions projections were combined with the Mauna Loa-corrected excess CO2 removal rate of 2.3%/yr (above an assumed background value of 295 ppm). Those results are shown in Fig. 2.

Now we can see the effect of just the differences in the carbon cycle models on the RCP scenarios: those full-blown models that try to address all of the individual components of the carbon cycle and how it changes as CO2 concentrations rise, versus my simple (but Mauna Loa data-supportive) model that only deals with the empirical observation that nature removes excess CO2 at a rate of 2.3%/yr of the atmospheric excess above 295 ppm.

This is an aspect of the RCP scenario discussion I seldom see mentioned: The realism of the RCP scenarios is not just a matter of what future CO2 emissions they assume, but also of the carbon cycle model which removes excess CO2 from the atmosphere.

Discussion

I will admit to knowing very little about the carbon cycle models used by the IPCC. I’m sure they are very complex (although I dare say not as complex as Mother Nature) and represent the state-of-the-art in trying to describe all of the various processes that control the huge natural flows of CO2 in and out of the atmosphere.

But uncertainties abound in science, especially where life (e.g. photosynthesis) is involved, and these carbon cycle models are built with the same philosophy as the climate models which use the output from the carbon cycle models: These models are built on the assumption that all of the processes (and their many approximations and parameterizations) which produce a reasonably balanced *average* carbon cycle picture (or *average* climate state) will then accurately predict what will happen when that average state changes (increasing CO2 and warming).

That is not a given.

Sometimes it is useful to step back and take a big-picture approach: What are the CO2 observations telling us about how the global average Earth system is responding to more atmospheric CO2? That is what I have done here, and it seems like a model match to such a basic metric (how fast is nature removing excess CO2 from the atmosphere, as the CO2 concentration rises) would be a basic and necessary test of those models.

According to Fig. 1, the carbon cycle models do not match what nature is telling us. And according to Fig. 2, it makes a big difference to the RCP scenarios of future CO2 concentrations in the atmosphere, which will in turn impact future projections of climate change.
