NOTE: I have written on this subject before, but it is important enough that we need to keep thinking about it. It is also related to the forcing-feedback paradigm of climate change, which I usually defend, but toward which I will take a skeptical view here in the context of long-term climate change.

The UN IPCC scientists who write the reports that guide international energy policy on fossil fuel use operate under the assumption that the climate system has a preferred, natural, and constant average state from which it deviates only through the meddling of humans. They construct their climate models so that the models do not produce any warming or cooling unless they are forced to do so by increasing anthropogenic greenhouse gases, aerosols, or volcanic eruptions.

This imposed behavior of their “control runs” is admittedly necessary because various physical processes in the models are not known well enough from observations and first principles, and so the models must be tinkered with until they produce what might be considered to be the “null hypothesis” behavior, which in their worldview means no long-term warming or cooling.

What I’d like to discuss here is NOT whether there are other ‘external’ forcing agents of climate change, such as the sun. That is a valuable discussion, but not what I’m going to address. I’d like to address the question of whether there really is an average state that the climate system is constantly re-adjusting itself toward, even if it is constantly nudged in different directions by the sun.

If there is such a preferred average state, then the forcing-feedback paradigm of climate change is valid. In that system of thought, any departure of the global average temperature from the Nature-preferred state is resisted by radiative “feedback”, that is, changes in the radiative energy balance of the Earth in response to the too-warm or too-cool conditions. Those radiative changes would constantly be pushing the system back to its preferred temperature state.
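As a rough illustration of what the forcing-feedback paradigm assumes, here is a minimal sketch of a zero-dimensional energy balance model, C dT/dt = F - λT, where T is the temperature departure from the preferred state. The function name and the values for heat capacity, feedback parameter, and forcing are illustrative assumptions, not numbers taken from this post or from any climate model.

```python
def relax(t0, forcing=0.0, feedback=1.5, heat_capacity=8.0, years=100, dt=0.1):
    """Integrate C dT/dt = F - lambda*T with forward Euler.

    t0            initial temperature departure (deg C)
    forcing       imposed radiative forcing F (W/m^2), assumed constant
    feedback      net feedback parameter lambda (W/m^2 per deg C), assumed
    heat_capacity effective heat capacity C (W yr/m^2 per deg C), assumed
    """
    temp = t0
    steps = int(round(years / dt))
    for _ in range(steps):
        # Radiative feedback (-lambda*T) always opposes the departure T.
        temp += dt * (forcing - feedback * temp) / heat_capacity
    return temp


if __name__ == "__main__":
    # With no forcing, a 1 deg C departure decays back toward zero.
    print("unforced:", relax(1.0))
    # With a steady forcing, the system settles near F / lambda.
    print("forced:  ", relax(0.0, forcing=3.0))
```

In this toy model there is exactly one equilibrium for a given forcing, which is the assumption the rest of this post questions.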

But what if there isn’t only one preferred state?

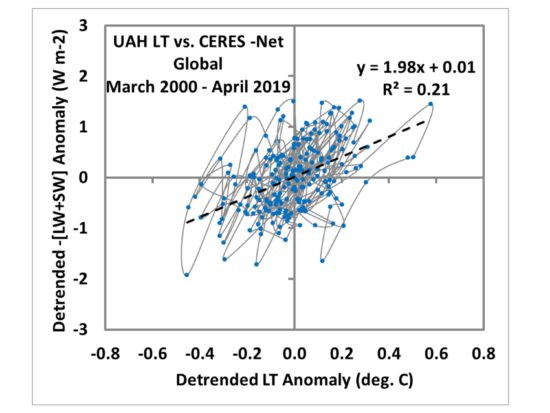

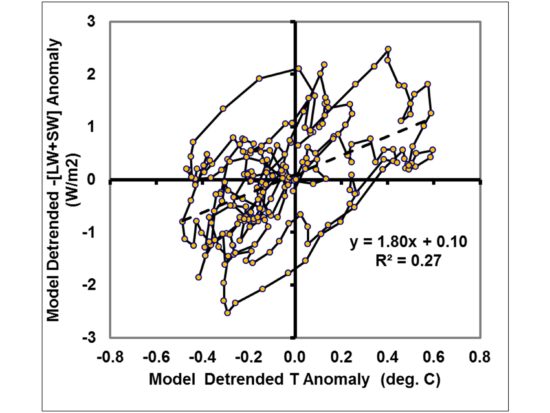

I am of the opinion that the forcing-feedback (F-F) paradigm does indeed apply for at least year-to-year fluctuations, because phase space diagrams of the co-variations between temperature and radiative flux look just like what we would expect from an F-F perspective. I touched on this in yesterday’s post.

Where the F-F paradigm might be inapplicable is in the context of long-term climate changes which are the result of internal fluctuations.

Chaos in the Climate System

Everyone agrees that the ocean-atmosphere fluid flows represent a nonlinear dynamical system. Such systems, although deterministic (that is, they can be described with known physical equations), are difficult to predict because their future behavior depends strongly on small details of the current state. This is called “sensitive dependence on initial conditions”, and it is why weather cannot be forecast more than a week or so in advance.
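Sensitive dependence on initial conditions can be seen in the Lorenz (1963) equations, a standard textbook example of a chaotic system. The sketch below is only an illustration: these three equations are not the climate system's equations, and the starting point, perturbation size, and step count are arbitrary assumptions. It integrates two trajectories that start almost identically and records how far apart they drift.

```python
import math


def lorenz_rhs(state, sigma=10.0, rho=28.0, beta=8.0 / 3.0):
    """Right-hand side of the Lorenz (1963) system with its classic parameters."""
    x, y, z = state
    return (sigma * (y - x), x * (rho - z) - y, x * y - beta * z)


def rk4_step(state, dt):
    """Advance the state by one fourth-order Runge-Kutta step."""
    def shifted(base, slope, scale):
        return tuple(b + scale * s for b, s in zip(base, slope))

    k1 = lorenz_rhs(state)
    k2 = lorenz_rhs(shifted(state, k1, dt / 2))
    k3 = lorenz_rhs(shifted(state, k2, dt / 2))
    k4 = lorenz_rhs(shifted(state, k3, dt))
    return tuple(
        s + dt / 6 * (a + 2 * b + 2 * c + d)
        for s, a, b, c, d in zip(state, k1, k2, k3, k4)
    )


def separation_history(perturbation=1e-8, steps=4000, dt=0.01):
    """Distance between two runs whose initial x values differ by `perturbation`."""
    a = (1.0, 1.0, 1.0)
    b = (1.0 + perturbation, 1.0, 1.0)
    history = []
    for _ in range(steps):
        a = rk4_step(a, dt)
        b = rk4_step(b, dt)
        history.append(math.dist(a, b))
    return history


if __name__ == "__main__":
    history = separation_history()
    print("separation after first step:", history[0])
    print("largest separation reached: ", max(history))
```

The two runs obey identical equations, yet the tiny initial difference grows until the trajectories are effectively unrelated, which is the behavior that limits weather forecasts.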

The reason why most climate researchers do not think this is important for climate forecasting is that they are dealing with how the future climate might differ from today’s climate in a time-averaged sense, due not to changes in initial conditions but to changes in the “boundary conditions”, that is, increasing CO2 in the atmosphere. Humans are slightly changing the rules by which the climate system operates, specifically through the estimated ~1-2% change in the rate of cooling of the climate system to outer space as a result of increasing CO2.

There are still chaotic variations in the climate system, which is why any given climate model forced with the same amount of increasing CO2 but started from different initial conditions in 1760 will produce a different globally-averaged temperature in, say, 2050 or 2060.

But what if the climate system undergoes its own, substantial chaotic changes on long time scales, say 100 to 1,000 years? The IPCC assumes this does not happen. But the ocean has inherently long time scales — decades to millennia. An unusually large amount of cold bottom water formed at the surface in the Arctic in one century might take hundreds or even thousands of years before it re-emerges at the surface, say in the tropics. This time lag can introduce a wide range of complex behaviors in the climate system, and is capable of producing climate change all by itself.

Even the sun, which we view as a constantly burning ball of gas, produces an 11-year cycle in sunspot activity, and even that cycle changes in strength over hundreds of years. It would seem that every process in nature organizes itself on preferred time scales, with some amount of cyclic behavior.

This chaotic climate change behavior would impact the validity of the forcing-feedback paradigm as well as our ability to determine future climate states and the sensitivity of the climate system to increasing CO2. If the climate system has different, but stable and energy-balanced, states, it could mean that climate change is too complex to predict with any useful level of accuracy.

El Nino / La Nina as an Example of a Chaotic Cycle

Most climate researchers view the warm El Nino and cool La Nina episodes conceptually as departures from an average climate state. But I believe that they are more accurately viewed as a bifurcation in the chaotic climate system. In other words, during Northern Hemisphere winter, there are two different climate states (El Nino or La Nina) that the climate system tends toward. Each has its own relatively stable configuration of Pacific trade winds, sea surface temperature patterns, cloudiness, and global-average temperature.

So, in a sense, El Nino and La Nina are different climate states which Earth has difficulty choosing between each year. One is a globally warm state, the other globally cool. This chaotic “bifurcation” behavior has been described in the context of even extremely simple systems of nonlinear equations, vastly simpler than the equations describing the time-evolving real climate system.
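One of the simplest equations known to show this kind of bifurcation is the logistic map, x_next = r * x * (1 - x). The sketch below is not a model of El Nino or La Nina; it only illustrates how a single nonlinear equation can switch from one long-run state to two alternating states when a parameter changes. The function name and the chosen values of r are assumptions made for the illustration.

```python
def long_run_states(r, x0=0.3, transient=1000, samples=64, digits=6):
    """Return the distinct values the logistic map visits after a long spin-up."""
    x = x0
    # Discard the transient so only the long-run behavior remains.
    for _ in range(transient):
        x = r * x * (1.0 - x)
    states = set()
    for _ in range(samples):
        x = r * x * (1.0 - x)
        states.add(round(x, digits))
    return sorted(states)


if __name__ == "__main__":
    # Below r = 3 the map settles on a single value.
    print("r = 2.8:", long_run_states(2.8))
    # Just above r = 3 it alternates between two values.
    print("r = 3.2:", long_run_states(3.2))
```

For r = 3.2 the long-run average of the two values is not itself a state the system ever occupies, which loosely parallels the idea that an "average" between El Nino and La Nina may not be a state the climate system prefers.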

The Medieval Warm Period and Little Ice Age

Most historical records and temperature proxy evidence point to the Medieval Warm Period and Little Ice Age as real, historical events. I know that most people try to explain these events as the response to some sort of external forcing agent, say indirect solar effects from long-term changes in sunspot activity. This is a natural human tendency… we see a change, and we assume there must be a cause external to the change.

But a nonlinear dynamical system needs no external forcing to experience change. I’m not saying that the MWP and LIA were not externally forced, only that their explanation does not necessarily require external forcing.

There could be internal modes of chaotic fluctuations in the ocean circulation which produce their own stable climate states which differ in global-average temperature by, say, 1 deg. C. One possibility is that they would have slightly different sea surface temperature patterns or oceanic wind speeds, which can cause slightly different average cloud amounts, thus altering the planetary albedo and so the amount of sunlight the climate system has to work with. Or, the precipitation systems produced by the different climate states could have slightly different precipitation efficiencies, which then would affect the average amount of the atmosphere’s main greenhouse gas, water vapor.

Chaotic Climate Change and the Forcing-Feedback Paradigm

If the climate system has multiple, stable climate states, each with its own set of slightly different energy flows that still produce global energy balance and relatively constant temperatures (whether warmer or cooler), then the “forcing-feedback framework” (FFF, as my Australian friend Christopher Game likes to call it) would not apply to these climate variations, because there is no normal, average climate state toward which ‘feedback’ is constantly nudging the system.
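A toy example of a system with more than one stable state is the double-well equation dT/dt = T - T^3, which has stable equilibria at T = +1 and T = -1 and an unstable one at T = 0. This is a purely hypothetical, dimensionless sketch and not a climate model; it only shows that where the system ends up can depend on where it starts, so there is no single state that it is always being pushed back toward.

```python
def settle(temp, steps=5000, dt=0.01):
    """Integrate the toy equation dT/dt = T - T**3 with forward Euler."""
    for _ in range(steps):
        temp += dt * (temp - temp ** 3)
    return temp


if __name__ == "__main__":
    # Starting slightly on the warm side ends in the warm state (+1).
    print("start  0.2 ->", settle(0.2))
    # Starting slightly on the cool side ends in the cool state (-1).
    print("start -0.2 ->", settle(-0.2))
    # A departure from the warm state returns to the warm state, not to zero.
    print("start  1.3 ->", settle(1.3))
```

Within either well the restoring tendency looks like ordinary feedback, but a large enough internal fluctuation could move the system from one well to the other without any external forcing.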

Part of the reason for this post is the ongoing discussion I have had over the years with Christopher on this issue, and I want him to know that I am not totally deaf to his concerns about the FFF. As I described yesterday, we do see forcing-feedback type behavior in short-term climate fluctuations, but I agree that the FFF might not be applicable to longer-term fluctuations. In this sense, I believe Christopher Game is correct.

The UN IPCC Will Not Address This Issue

It is clear that the UN IPCC, by its very charter, is primarily focused on human-caused climate change. As a result of political influence (related to the desire for governmental regulation of the private sector), it will never seriously address the possibility that long-term climate change might be part of nature. Only those scientists who are supportive of this anthropocentric climate view are allowed to play in the IPCC sandbox.

Substantial chaos in the climate system injects a large component of uncertainty into all predictions of future climate change, including our ability to determine climate sensitivity. It reduces the practical value of climate modeling efforts, which cost billions of dollars and support the careers of thousands of researchers. While I am generally supportive of climate modeling, I am appropriately skeptical of the ability of current climate models to provide enough confidence to make high-cost energy policy decisions.
