NOTE: This post has undergone a few revisions as I try to be more precise in my wording. The latest revision was at 0900 CDT Sept. 12, 2019.

If this post is re-posted elsewhere, I ask that the above time stamp be included.

Yesterday I posted an extended and critical analysis of Dr. Pat Frank’s recent publication entitled Propagation of Error and the Reliability of Global Air Temperature Projections. Dr. Frank graciously provided rebuttals to my points, none of which have changed my mind on the matter. I have made it clear that I don’t trust climate models’ long-term forecasts, but that is for different reasons than Pat provides in his paper.

What follows is the crux of my main problem with the paper, which I have distilled to its essence, below. I have avoided my previous mistake of paraphrasing Pat, and instead I will quote his conclusions verbatim.

In his Conclusions section, Pat states “As noted above, a GCM simulation can be in perfect external energy balance at the TOA while still expressing an incorrect internal climate energy-state.”

This I agree with, and I believe climate modelers have admitted to this as well.

But, he then further states, “LWCF [longwave cloud forcing] calibration error is +/- 144 x larger than the annual average increase in GHG forcing. This fact alone makes any possible global effect of anthropogenic CO2 emissions invisible to present climate models.”

While I agree with the first sentence, I thoroughly disagree with the second. Together, they represent a non sequitur. All of the models show the effect of anthropogenic CO2 emissions, despite known errors in components of their energy fluxes (such as clouds)!

Why?

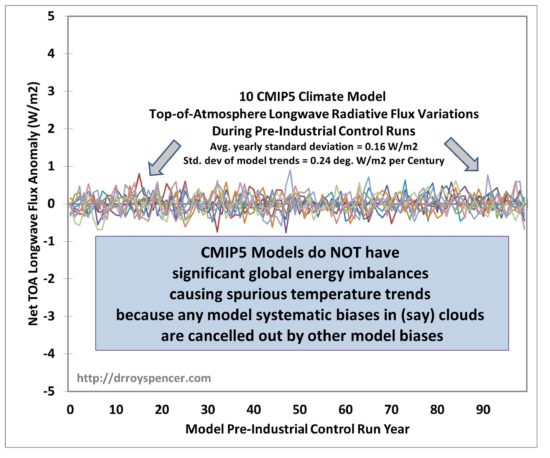

If a model has been forced to be in global energy balance, then energy flux component biases have been cancelled out, as evidenced by the control runs of the various climate models in their LW (longwave infrared) behavior:

Importantly, this forced balancing of the global energy budget is not done at every model time step, or every year, or every 10 years. If that were the case, I would agree with Dr. Frank that the models are useless, and for the reason he gives. Instead, it is done once, for the average behavior of the model over multi-century pre-industrial control runs, like those in Fig. 1.
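
To make the "balanced once, not every time step" point concrete, here is a minimal sketch using a one-box energy balance model. The model is my own illustrative assumption, not how any actual GCM is built or tuned. A constant component flux error (`bias`) is cancelled by a single constant `offset` chosen from the control run, and that offset is never re-tuned during the run. The heat capacity `C`, feedback parameter `LAM`, and forcing ramp are hypothetical round numbers.

```python
# Toy one-box energy balance model (illustrative assumption, not a GCM):
#   C dT/dt = F(t) + bias - offset - LAM * T
# `bias` stands in for a component flux error (e.g. in cloud longwave forcing);
# `offset` is a single constant chosen once from the control run.

C = 8.0    # hypothetical mixed-layer heat capacity, W yr m^-2 K^-1
LAM = 1.3  # hypothetical net feedback parameter, W m^-2 K^-1


def run(forcing, bias, offset, years):
    """Integrate with 1-year forward Euler steps from T = 0; return temperatures (K)."""
    residual = bias - offset  # fixed for the whole run, never re-tuned
    temps = []
    t = 0.0
    for year in range(years):
        t += (forcing(year) + residual - LAM * t) / C
        temps.append(t)
    return temps


def no_forcing(year):
    return 0.0


def ramp(year):
    return 0.04 * year  # hypothetical forcing growth, W m^-2 per year


bias = 4.0  # hypothetical component flux error, W m^-2

# Untuned control run: the bias drives a drift toward bias / LAM.
untuned = run(no_forcing, bias, offset=0.0, years=500)

# One-time tuning: the constant that zeroes the control-run drift,
# which in this linear toy is simply the bias itself.
offset = bias
tuned_control = run(no_forcing, bias, offset, years=500)

# Forced runs: tuned (but still biased) model versus a model with no bias at all.
forced_tuned = run(ramp, bias, offset, years=100)
forced_clean = run(ramp, 0.0, 0.0, years=100)

print(f"untuned control after 500 yr: {untuned[-1]:.2f} K")
print(f"tuned control after 500 yr:   {tuned_control[-1]:.2f} K")
print(f"forced warming, tuned model:  {forced_tuned[-1]:.3f} K")
print(f"forced warming, no bias:      {forced_clean[-1]:.3f} K")
```

In this linear toy, the untuned control drifts toward `bias / LAM`, while the once-tuned model and the bias-free model produce identical forced warming. A real GCM is nonlinear, so this only illustrates the logic of the argument.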

The ~20 different models from around the world cover a WIDE variety of errors in the component energy fluxes, as Dr. Frank shows in his paper, yet they all basically behave the same in their temperature projections for the same (1) climate sensitivity and (2) rate of ocean heat uptake in response to anthropogenic greenhouse gas emissions.
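
The same idea can be sketched for a small ensemble. The code below assumes a standard two-layer (mixed layer plus deep ocean) energy balance model; every model name, component bias, feedback parameter `lam`, and ocean heat uptake coefficient `gamma` is hypothetical. Models A, B, and C have very different component errors but the same `lam` and `gamma`, while model D differs only in its sensitivity.

```python
from dataclasses import dataclass

F_RAMP = 0.04   # hypothetical forcing growth, W m^-2 per year
C_MIX = 8.0     # hypothetical upper-layer heat capacity, W yr m^-2 K^-1
C_DEEP = 100.0  # hypothetical deep-ocean heat capacity, W yr m^-2 K^-1


@dataclass
class ToyModel:
    name: str
    component_biases: tuple  # hypothetical component flux errors, W m^-2
    lam: float               # net feedback parameter (sets sensitivity), W m^-2 K^-1
    gamma: float             # ocean heat uptake coefficient, W m^-2 K^-1

    def warming_after(self, years):
        """Upper-layer warming (K) after `years` of ramped forcing, 1-yr Euler steps."""
        # Tuned once: a constant offset equal to the summed component errors,
        # applied unchanged at every step (not re-tuned during the run).
        offset = sum(self.component_biases)
        residual = sum(self.component_biases) - offset  # zero after tuning
        t_mix, t_deep = 0.0, 0.0
        for year in range(years):
            uptake = self.gamma * (t_mix - t_deep)  # heat flux into the deep ocean
            t_mix += (F_RAMP * year + residual - self.lam * t_mix - uptake) / C_MIX
            t_deep += uptake / C_DEEP
        return t_mix


models = [
    ToyModel("A", (4.0, -2.5, 0.5), lam=1.3, gamma=0.7),
    ToyModel("B", (-3.0, 1.0, -1.5), lam=1.3, gamma=0.7),
    ToyModel("C", (0.5, 0.5, 0.5), lam=1.3, gamma=0.7),
    ToyModel("D", (4.0, -2.5, 0.5), lam=0.9, gamma=0.7),  # higher sensitivity
]

for m in models:
    print(f"model {m.name}: {m.warming_after(100):.3f} K after 100 years")
```

Under these assumptions A, B, and C print identical warming despite their different component errors, and only D, with its lower feedback parameter (higher sensitivity), departs from them.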

Thus, the models themselves demonstrate that their global warming forecasts do not depend upon those bias errors in the components of the energy fluxes (such as global cloud cover) as claimed by Dr. Frank (above).

That’s partly why different modeling groups around the world build their own climate models: so they can test the impact of different assumptions on the models’ temperature forecasts.

Statistical modelling assumptions and error analysis do not change this fact. A climate model (like a weather forecast model) has time-dependent differential equations covering dynamics, thermodynamics, radiation, and energy conversion processes. There are physical constraints in these models that lead to internally compensating behaviors. There is no way to represent this behavior with a simple statistical analysis.
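
As a rough illustration of the difference, the sketch below contrasts a generic root-sum-square accumulation of a yearly flux uncertainty `U` with the response of a toy differential equation to the same persistent offset. This is an assumed, simplified stand-in for "a simple statistical analysis", not a reproduction of the calculation in Dr. Frank's paper; the value of `U`, the conversion to kelvin via `1 / LAM`, and all other constants are hypothetical.

```python
import math

U = 4.0     # hypothetical yearly flux uncertainty / offset, W m^-2
LAM = 1.3   # hypothetical net feedback parameter, W m^-2 K^-1
C = 8.0     # hypothetical heat capacity, W yr m^-2 K^-1
YEARS = 100

# (a) Generic root-sum-square accumulation: n independent yearly errors of
# size U added in quadrature, converted to K with the factor 1 / LAM.
rss = [U / LAM * math.sqrt(n) for n in range(1, YEARS + 1)]

# (b) The same persistent offset fed through C dT/dt = U - LAM * T
# (1-year forward Euler steps): the feedback term limits the response.
dyn = []
t = 0.0
for _ in range(YEARS):
    t += (U - LAM * t) / C
    dyn.append(t)

print(f"root-sum-square spread after {YEARS} yr: {rss[-1]:.1f} K")
print(f"dynamical response after {YEARS} yr:    {dyn[-1]:.2f} K (limit U / LAM = {U / LAM:.2f} K)")
```

The quadrature sum grows with the square root of the number of years without limit, while the feedback term `-LAM * T` keeps the dynamical response bounded near `U / LAM`.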

Again, I am not defending current climate models’ projections of future temperatures. I’m saying that errors in those projections are not due to what Dr. Frank has presented. They are primarily due to the processes controlling climate sensitivity (and the rate of ocean heat uptake). And climate sensitivity, in turn, is a function of (for example) how clouds change with warming, and apparently not a function of errors in a particular model’s average cloud amount, as Dr. Frank claims.
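
In a purely linear feedback framework, which is a simplifying assumption, this can be written as ΔT_2x = F_2x / λ, with λ equal to the non-cloud feedback parameter minus the cloud feedback. A constant error in the mean cloud forcing shifts both the control and the doubled-CO2 equilibria by the same amount and cancels in the difference. The sketch assumes F_2x ≈ 3.7 W/m² for doubled CO2 and a hypothetical non-cloud feedback parameter `LAM_OTHER`; it does not capture the possibility that a mean cloud error alters the feedback itself in a real model.

```python
# Linear feedback toy model (simplifying assumption, not a GCM).
F_2X = 3.7       # approximate radiative forcing for doubled CO2, W m^-2
LAM_OTHER = 2.0  # hypothetical non-cloud net feedback parameter, W m^-2 K^-1


def equilibrium_temp(forcing, mean_cloud_bias, cloud_feedback):
    """Equilibrium temperature anomaly (K) with a constant cloud-forcing error.

    A positive cloud_feedback (W m^-2 K^-1) amplifies warming by reducing
    the net feedback parameter lam.
    """
    lam = LAM_OTHER - cloud_feedback
    return (forcing + mean_cloud_bias) / lam


def sensitivity(mean_cloud_bias, cloud_feedback):
    """Warming from doubled CO2: the difference of two equilibria."""
    return (equilibrium_temp(F_2X, mean_cloud_bias, cloud_feedback)
            - equilibrium_temp(0.0, mean_cloud_bias, cloud_feedback))


for feedback in (-0.3, 0.0, 0.5):
    row = [sensitivity(bias, feedback) for bias in (-4.0, 0.0, 4.0)]
    print(f"cloud feedback {feedback:+.1f}: " + ", ".join(f"{x:.2f} K" for x in row))
```

Each printed row is constant across the mean cloud biases, while the rows differ from one another: in this toy, sensitivity changes only with how clouds respond to warming.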

The similar behavior of the wide variety of different models with differing errors is proof of that. They all respond to increasing greenhouse gases, contrary to the claims of the paper.

The above represents the crux of my main objection to Dr. Frank’s paper. I have quoted his conclusions, and explained why I disagree. If he wishes to dispute my reasoning, I would request that he, in turn, quote what I have said above and why he disagrees with me.
